Disclaimer: the blogs express the opinions of their authors and do not reflect the position of the Essex HRC as an institution.

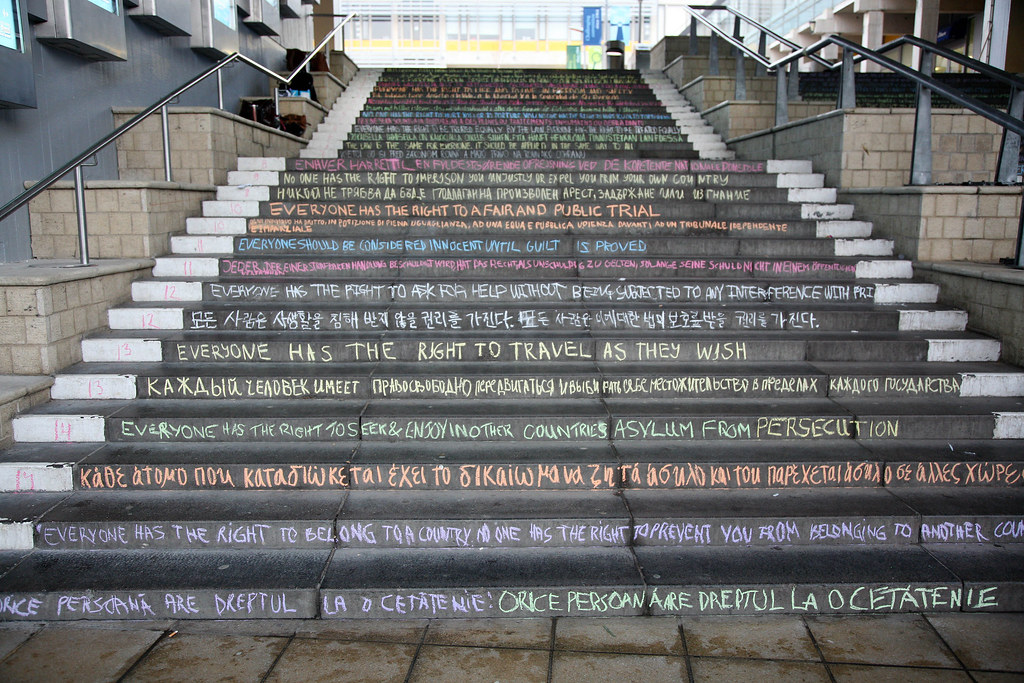

The Essex Human Rights Centre Blog

The Human Rights Centre blog seeks to publish posts relating to the theory and practice of human rights, looking at both contemporary events and longstanding issues in times of peace, instability, and in conflict and post-conflict settings. Our goal is to present a blog that provides interesting commentary of relevance to practitioners, students, academics, and those interested in human rights.

The blog is also your shortcut to the Human Rights Centre’s past and future activities and events.

Additionally, our RightsCast brings you discussion on a wide range of contemporary and enduring human rights issues from the University of Essex Human Rights Centre.

Bringing together diverse voices from all over the world, we apply a human rights lens to better understand current events, to discuss key issues, and to explore how to achieve social change.

From grassroots movements to major international affairs, join us each week as we talk to the people behind the stories and seek to create a dialogue around the role of human rights in our daily lives.

Navigate our website

Blog

Our Blogs section aims to provide our readers with the latest posts written by the HRC’s members, fellows, and guests. Our entries discuss current global and local affairs and human rights.

Weekly News Round-Up

Our Weekly News Round-Up platform provides you with the latest human rights news from around the world on a weekly basis and takes a deep dive into a specific event through a short article, “In Focus”.

Spotlight

Our Spotlight page regularly features a significant individual or team from the Human Rights community to answer questions put by students from the University of Essex.

Voices Rising: Stories from the Margins

Our Voices Rising: Stories from the Margins section is a platform for essays and stories written from minority perspectives and representing diverse backgrounds.

Events and Projects

Our Events and Projects section provides you with updates on our past and future events, such as the Speaker Series, Summer School, and conferences.

Who is running the blog?

Dr. Katya Alkhateeb has been our blog editor since 2019.